## The Theory

I’ve been coding a lot with AI agents lately. Claude Code, Cursor, Copilot, the usual suspects. And one question kept bugging me:

If I wrote comments simple enough for a second-year CS student to follow, would the AI do better at poking around and editing the codebase?

It seemed obvious on the face of it. Models train on code. Comments come along for the ride. If those comments spell things out in plain English, the agent has more to chew on. Inline onboarding docs, basically.

But I didn’t want to just go with my gut. Has anyone actually tested this? Or are we all just making it up?

Six papers, three tool vendors, and a bunch of Hacker News threads later, here’s where I landed.

## The Short Answer

Sort of yes, with one big asterisk. LLMs clearly do read comments, and they trust them a lot. Training on more heavily commented code produces better code models, at least on the single-function benchmarks where that has been measured. But once the AI is actually sitting in your project doing work? The picture gets blurry fast. And weirdly, the exact scenario I was asking about has never been directly tested, as far as I can find.

## What the Academic Research Shows

### LLMs Follow Comments, For Better or Worse

Worth being careful with the framing here. The strongest studies don’t show that correct comments help models reason about code. They show that misleading comments hurt, a lot. That’s a narrower finding, and it’s the one the evidence actually supports: LLMs weight comments heavily enough that bad ones pull performance down hard. Whether good ones pull it up in the same direction is a separate question, and the inference-time evidence on that is much thinner (see below).

CodeCrash (NeurIPS 2025) fed misleading comments to 17 LLMs on code reasoning tasks. The result? A 23.2% average drop in performance. Chain-of-Thought prompting didn't fully rescue them either: a 13.8% drop remained. The models weren’t skimming. They were trusting comments over the actual code sitting right next to them.

Haroon et al. (Virginia Tech/CMU, 2025) ran 600,000 debugging tasks over 196 million lines of code. Misleading comments dragged fault-detection accuracy down to 24.55%. And when the researchers applied semantic-preserving mutations (changes that alter how the code looks but not what it does), the models failed to re-find bugs they had previously spotted in 78% of cases.

Bottom line: both studies tested adversarial or misleading comments. They demonstrate the downside risk when comments are wrong, not a symmetric upside when comments are right. That’s an important asymmetry to keep in mind as you read the rest of this post.

### More Comments = Better Models (In Training)

The strongest case for comments comes from the training side. Song et al. (ACL 2024) padded training data with generated comments, pushing comment density from 21.87% up to 38.23%. The numbers were kind of wild:

- Llama 2-7b: 40% relative improvement on HumanEval, 54% on MBPP

- Code Llama-7b: 25% relative improvement

- InternLM2-7b: 24% relative improvement

Models trained on heavily commented code beat the models trained on bare code. The paper’s abstract also notes the augmented-data model “outperformed both the model used for generating comments and the model further trained on the data without augmentation,” which is the part that made me do a double take.
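One thing worth keeping in mind when reading those figures: they are relative improvements, so their absolute size depends on the baseline. Here is a quick Python sketch of the arithmetic. The scores in it are hypothetical, chosen only to make the math easy to follow; they are not numbers from Song et al.

```python
def relative_improvement(baseline: float, new: float) -> float:
    """Relative gain of `new` over `baseline`, as a fraction (0.40 == 40%)."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (new - baseline) / baseline


def absolute_improvement(baseline: float, new: float) -> float:
    """Absolute gain in percentage points, when both scores are in percent."""
    return new - baseline


# Hypothetical pass@1 scores in percent (NOT from the paper).
baseline_score = 12.5
augmented_score = 17.5

rel = relative_improvement(baseline_score, augmented_score)
abs_gain = absolute_improvement(baseline_score, augmented_score)
print(f"relative: {rel:.0%}, absolute: {abs_gain:.1f} points")
# prints: relative: 40%, absolute: 5.0 points
```

On a low baseline, a headline-sized relative gain can be a handful of percentage points, which is still a real effect but a smaller one than "40%" suggests at first glance.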

One caveat up front: this is a training-time result, measured on HumanEval and MBPP, which are single-function benchmarks. It tells you that feeding an LLM more commented code during training produces a better model. It does not tell you that sprinkling more comments into your codebase will get better responses out of an already-trained AI working on your project. Those are different mechanisms, and the inference-time evidence in the next section is much less rosy about the second one. Easy to conflate. Try not to.

### But At Inference Time? It’s Complicated.

This is where my nice tidy theory hit a wall.

Macke & Doyle (MITRE, NAACL 2024) ran GPT-3.5 and GPT-4 through HumanEval with different documentation variations. Correct comments didn’t meaningfully improve task success versus a no-docs baseline. Code coverage in generated tests got a small lift, fine. But the thing you actually care about, did the AI finish the task, was basically a wash.

The catch, and it’s a big one: wrong or random comments absolutely wrecked performance. GPT-3.5 cratered to 22.1% success. GPT-4 dropped to 68.1%. Bad comments hurt much, much more than no comments at all.

### The Gap Nobody Has Filled

Here’s the part that caught me off guard: nobody has actually tested the thing I was asking about.

Every comment study I found runs on isolated functions. HumanEval. MBPP. Little self-contained snippets. But what about an agent crawling through a real multi-file codebase, reading architectural comments to figure out how the pieces connect? Microsoft’s Code Researcher paper describes real debugging work that hops across 29-plus files and 5-plus directories. Whether comments help an agent navigate at that scale hasn’t been studied.

The benchmarks are asking whether comments help an AI finish `def fibonacci(n):`. I’m asking whether comments help an AI figure out that your auth middleware hits the token service, which calls the user store, which has a cache that flushes on profile updates. Those are not the same question.
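To make the distinction concrete, here is a minimal Python sketch of what architectural comments for that chain might look like. Every class and method name is hypothetical, invented for illustration, and the in-memory dicts stand in for a real database and cache. The point is the docstrings: they say who calls whom and which invariant crosses the module boundary, which is exactly the information a function-level benchmark never exercises.

```python
from dataclasses import dataclass


@dataclass
class User:
    user_id: str
    display_name: str


class UserStore:
    """Source of truth for users, fronted by an in-memory cache.

    Called by: TokenService.resolve(), on every authenticated request.
    WHY update_profile() flushes the cache: requests read users through
    get(), so a write that skipped the flush would keep serving the old
    profile for as long as this process lives.
    """

    def __init__(self) -> None:
        self._rows: dict[str, User] = {}   # hypothetical stand-in for a database
        self._cache: dict[str, User] = {}

    def get(self, user_id: str) -> User:
        if user_id not in self._cache:
            self._cache[user_id] = self._rows[user_id]
        return self._cache[user_id]

    def update_profile(self, user_id: str, display_name: str) -> None:
        self._rows[user_id] = User(user_id, display_name)
        self._cache.pop(user_id, None)  # flush; see the class docstring


class TokenService:
    """Maps bearer tokens to users.

    Calls: UserStore.get(), never the raw rows, so it always sees the cache.
    Called by: AuthMiddleware.handle().
    """

    def __init__(self, store: UserStore) -> None:
        self._store = store
        self._tokens: dict[str, str] = {}

    def issue(self, user_id: str) -> str:
        token = f"tok-{user_id}"  # placeholder; a real service issues unguessable tokens
        self._tokens[token] = user_id
        return token

    def resolve(self, token: str) -> User:
        return self._store.get(self._tokens[token])


class AuthMiddleware:
    """Entry point for every request: header -> TokenService -> UserStore."""

    def __init__(self, tokens: TokenService) -> None:
        self._tokens = tokens

    def handle(self, headers: dict[str, str]) -> User:
        scheme, _, token = headers["Authorization"].partition(" ")
        if scheme != "Bearer":
            raise PermissionError("expected a Bearer token")
        return self._tokens.resolve(token)
```

An agent asked to "add a bulk profile import" to this sketch gets told, at the point of use, that skipping the cache flush breaks every authenticated request. Whether that kind of comment measurably helps an agent is precisely the open question.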

## What Practitioners Are Doing

While the academics are prodding at isolated functions, folks in the trenches have been figuring out their own answers. And what they’ve landed on rhymes with my theory, just broader.

### “Treat Your Codebase Like Onboarding Docs”

A recurring idea in developer communities is that AI agents do better when a codebase is structured the way you’d onboard a new human: design docs available, build and test commands clear, code organized for readability rather than cleverness. I can’t point at a specific study backing that intuition, but it sits close enough to educational-style comments that it’s worth naming. The community has mostly settled on a different delivery mechanism for that kind of guidance, though, which is where we turn next.

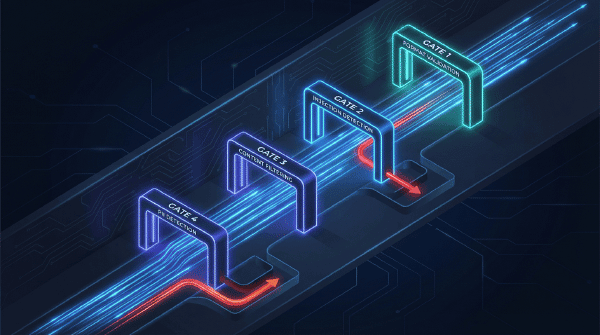

### Context Files: Common Practice, Contested Evidence

Every major AI coding tool now ships a dedicated way to feed the model project context:

| Tool | Mechanism | What Goes In It |
|---|---|---|
| Claude Code | CLAUDE.md | Project instructions, coding standards, architecture |
| GitHub Copilot | .github/copilot-instructions.md | Repository-specific guidance |
| Cursor | .cursor/rules | Project-specific AI behavior rules |
| Documentation sites | llms.txt | AI-friendly docs index |

The practice is near-universal. Whether it actually helps is where things get interesting.

### The Counter-Evidence

Right as I was writing this up, Chen et al. (2026, arXiv:2602.11988) dropped a study that goes straight at the assumption. They evaluated AGENTS.md-style context files (the same category as CLAUDE.md) across multiple agents and LLMs on real coding tasks, and found:

- Context files tended to reduce task success rates compared to giving the agent no repo context at all.

- Inference cost went up by over 20%.

- Both human-written and auto-generated context files showed the same pattern.

- Agents did follow the instructions; they just ended up doing more exploration, testing, and file traversal than the task needed.

Their recommendation isn’t “stop using them.” It’s more surgical: “human-written context files should describe only minimal requirements.” Padding these files with architecture essays, style preferences, and defensive instructions appears to actively hurt.

That finding maps onto a pattern from the comment research above. Models are obedient readers. If you tell them to explore ten directories before editing a line, they’ll do it. If you tell them the wrong thing, they’ll do that too. The fix is fewer, sharper instructions, not more.

So the honest version of the advice is: context files are worth using, but keep them lean. Architecture and conventions, yes. Style nitpicks and speculative warnings, probably not. The more you write in one, the more likely you are to tax the agent’s attention for no gain.

### “Why” Comments Beat “What” Comments

When devs do make a case for inline comments and AI, they almost always land on explaining why, not what. The reasoning is straightforward: the AI can read the code and see what it does, but it can’t figure out why you did it that way. Constraints, workarounds, quiet business rules, and deliberate deviations from a standard pattern all live in that gap. Documenting them helps the AI avoid “correcting” code that is intentionally different. This is editorial wisdom from the community rather than a lab-validated finding, so take it in that spirit. Still, the failure mode it protects against (the AI helpfully rewriting your intentional workaround) is real enough that most practitioners I’ve read consider it cheap insurance.
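Here is a small Python illustration of the kind of deliberate deviation a “why” comment protects. The function name is hypothetical; `hmac.compare_digest` is a real standard-library function. Without the docstring, an agent tidying up the code could reasonably swap the call for a plain `==`, which looks simpler and passes the same tests.

```python
import hmac


def verify_webhook_signature(expected_sig: str, received_sig: str) -> bool:
    """Return True if the received signature matches the expected one.

    WHY hmac.compare_digest instead of ==: a plain comparison may stop at
    the first mismatching character, leaking timing information that an
    attacker can use to guess the signature piece by piece.
    compare_digest is designed to avoid that kind of short-circuiting,
    so please don't "simplify" this to expected_sig == received_sig.
    """
    # Encode to bytes so non-ASCII input doesn't raise TypeError.
    return hmac.compare_digest(expected_sig.encode(), received_sig.encode())
```

The comment says nothing about what the line does. It records the one fact the code can't: that the obvious alternative was considered and rejected on purpose.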

## The Evidence Table

| Claim | Evidence Level | Verdict |
|---|---|---|
| Misleading comments degrade LLM reasoning | Strong (CodeCrash, Haroon et al.) | Confirmed |
| Comment-augmented training data improves code LLMs (on HumanEval / MBPP) | Strong (Song et al., 24–54% relative gains) | Confirmed for training; not shown to carry over to inference on your codebase |
| Correct comments improve inference-time task success | Weak/Mixed (Macke & Doyle) | Unconfirmed |
| Incorrect comments harm LLM performance | Very Strong (CodeCrash, Haroon et al., Macke & Doyle) | Confirmed |
| Educational-level comments help agent codebase exploration | No direct evidence | Gap in literature |
| Comment quality matters more than comment presence | Strong (multiple papers) | Confirmed |
| Repo-level context files (CLAUDE.md, AGENTS.md) improve agent task success | Against (Chen et al., arXiv:2602.11988: reduced success, >20% cost increase) | Contradicted; minimal-requirements approach recommended |

## What This Means If You’re Using AI Coding Tools

### 1. Accuracy Over Volume

The most consistent finding across the whole pile of research: wrong comments are way worse than no comments. So if you’re going to write beginner-friendly comments for your AI, they have to be right, and they have to stay right as the code moves. A stale comment describing last month’s architecture is actively dangerous. The AI will walk it straight off a cliff.
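Here is a small, hypothetical Python illustration of how that happens. The two functions below behave identically; only the comments differ. An agent that trusts the first comment and “simplifies” the function to return a flat 5.00 would silently change pricing for every order over 2 kg. The rates and threshold are invented for the example.

```python
def shipping_cost_stale(weight_kg: float) -> float:
    # Flat rate of 5.00 for all orders.
    # (STALE: true before a refactor added per-kg pricing.)
    return 5.00 if weight_kg <= 2 else 5.00 + 1.50 * (weight_kg - 2)


def shipping_cost(weight_kg: float) -> float:
    # Flat 5.00 up to 2 kg, then 1.50 per additional kg.
    base, threshold, per_kg = 5.00, 2.0, 1.50
    if weight_kg <= threshold:
        return base
    return base + per_kg * (weight_kg - threshold)
```

No comment at all would leave the agent reading the code. The stale one actively points it the wrong way.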

### 2. Use the Context File, Sparingly

Whatever tool you’re using has a structured context file: CLAUDE.md, .cursor/rules, .github/copilot-instructions.md, or similar. The intuitive advice is “use it.” The more careful advice, after Chen et al. (arXiv:2602.11988), is: use it, but keep it short. That paper found these files tended to reduce task success while raising inference cost by over 20%, and its recommendation was to “describe only minimal requirements.” So the rule of thumb I’d offer is: include only the things an agent genuinely cannot infer from the code (architecture overview, nonstandard conventions, key constraints), and resist the temptation to fill it with style preferences and speculative warnings. Every line in that file is a tax on every task.

### 3. Comment the “Why”, Not the “What”

When you do reach for an inline comment, point it at:

- Why you picked this approach over the obvious alternative

- What constraints or business rules forced the decision

- Where the gotchas are that an AI might stumble into

- How modules connect at their edges

Skip the `// increment counter` stuff. The AI can read `counter++` just fine. So can a human.
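In Python terms, the difference looks something like this. The function and the billing-system constraint are hypothetical, invented for illustration.

```python
def number_invoice_lines(items: list[str]) -> list[tuple[int, str]]:
    """Pair each invoice item with its line number."""
    numbered = []
    line_no = 0
    for item in items:
        # What-comment (adds nothing): increment counter
        # Why-comment (adds context): numbering starts at 1, not 0, because
        # the downstream billing system rejects a line number of 0.
        line_no += 1
        numbered.append((line_no, item))
    return numbered
```

Idiomatic Python would usually write this loop as `enumerate(items, start=1)`; the explicit counter is kept only to mirror the `counter++` example. The why-comment is the one worth keeping either way, since it is the only thing stopping an agent from “fixing” the numbering to start at 0.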

### 4. The Hypothesis Is Testable

If somebody wanted to actually settle this, and I really do think somebody should, here’s roughly the study that would do it:

- Take a real multi-file codebase

- Build three versions: no comments, minimal expert-style comments, educational comments

- Point an AI agent at the same tasks in all three

- Measure: task completion, code quality, time to done, how many files it had to explore

Control for comment accuracy (keep them clean) and task complexity (simple edits vs. multi-file architectural changes).
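A minimal Python sketch of how runs from that study could be recorded and summarized. The field names, condition labels, and the choice to group by (condition, complexity) are my assumptions, not an established protocol. Code quality is deliberately left out, since it needs its own rubric or review process.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Run:
    """One agent attempt at one task in one version of the codebase."""
    condition: str       # e.g. "none", "expert", "educational"
    complexity: str      # e.g. "simple-edit", "multi-file"
    task_id: str
    completed: bool      # did the change pass the task's acceptance tests?
    minutes: float       # wall-clock time to done
    files_explored: int  # distinct files the agent opened


def summarize(runs: list[Run]) -> dict[tuple[str, str], dict[str, float]]:
    """Group runs by (condition, complexity) and average each metric."""
    groups: dict[tuple[str, str], list[Run]] = {}
    for run in runs:
        groups.setdefault((run.condition, run.complexity), []).append(run)
    return {
        key: {
            "completion_rate": mean(1.0 if r.completed else 0.0 for r in rs),
            "mean_minutes": mean(r.minutes for r in rs),
            "mean_files_explored": mean(float(r.files_explored) for r in rs),
        }
        for key, rs in groups.items()
    }
```

Keeping complexity in the grouping key means a win on simple edits can't hide a loss on multi-file changes, which is the case this whole post is about.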

Training-time evidence says comments help models learn. Inference-time evidence on toy benchmarks says they barely move the needle. The real multi-file agent case? Still a blank spot on the map. That’s the study I want to see.

## The Refined Theory

After sitting with all of this for a while, here’s where I ended up:

Accurate, beginner-friendly comments that explain architectural intent and cross-module relationships probably help AI agents work on multi-file codebases, while wrong or stale comments will pull them below a no-comment baseline.

The “probably” is doing a lot of heavy lifting. I believe it based on the surrounding evidence: LLMs lean hard on comments, heavily commented training data makes better models, and practitioners keep converging on “write for clarity.” But nobody has actually proven it for the specific case of an AI agent exploring and editing a real codebase. Not yet.

In the meantime, I’m writing lean CLAUDE.md files (a position I changed after reading Chen et al.), commenting the “why” and skipping the “what,” and keeping whatever I do write accurate. That much the evidence backs, with the honest caveat that “accurate and minimal” is a much more defensible recommendation right now than “write lots of educational comments everywhere.”

## Sources

Academic Papers:

- Code Needs Comments: Enhancing Code LLMs with Comment Augmentation – Song et al., ACL 2024

- Testing the Effect of Code Documentation on LLM Code Understanding – Macke & Doyle (MITRE), NAACL 2024

- CodeCrash: Exposing LLM Fragility to Misleading NL in Code Reasoning – NeurIPS 2025

- How Accurately Do LLMs Understand Code? – Haroon et al. (Virginia Tech/CMU), 2025

- Do Context Files Help Coding Agents? – Chen et al., 2026 (arXiv:2602.11988)

Practitioner Sources:

- Claude Code Documentation

- GitHub Copilot Custom Instructions

- llms.txt Standard

- Code Researcher: Deep Research Agent – Microsoft Research

Further Reading (surfaced during research, not directly cited):